By Serena Cheung, Quality Assurance Lead, Pureprofile

It’s never been more critical for businesses to have up-to-date and accurate data on consumer attitudes, sentiment and behaviours. And market research is the fastest and most effective way to uncover these potentially game-changing insights. So, when conducting online research, how can you be sure that the results are of the highest quality?

One of the biggest challenges researchers face is making sure the data they collect is accurate. Over the years, experts have developed numerous quality assurance methods to help ensure that only reliable data is collected, so it’s critical to find a research partner that is honest and transparent about its approach to quality assurance. Simple checks such as an ‘attention test’ can stop unreliable data in its tracks.

When undertaking a research project, it’s important to remember that not all attention tests are equally effective. That’s why we’ve developed a unique approach that is proven to re-engage survey respondents and lead to better research results.

What is an attention test?

Let’s start with the basics. Over the past few decades, countless businesses have turned to online research to inform their decision-making, and with that growth came the need for quality assurance. The attention test was created to “catch out” respondents who have not read a question properly or have not answered accurately.

In short, an attention test is a question inserted mid-survey to check that the respondent is still paying close attention to the answers they’re giving.

Isn’t that a trap question?

Well, yes and no. A trap question is designed to trick respondents, and those who answer incorrectly are ultimately dismissed from the sample. Our attention test, on the other hand, gives respondents an opportunity to re-engage after losing interest or becoming distracted. Side by side, a trap question and an attention test look very similar; it’s our approach that differs.

However, there is always a chance that non-genuine respondents or fraudulent users are taking part in online surveys. Our unique approach to the attention test is designed to distinguish these people from legitimate respondents. In the past, 18% of respondents failed our conventional attention tests, which meant many genuine respondents were missing out on having their say. Today, roughly 4% fail, with no loss in data quality.

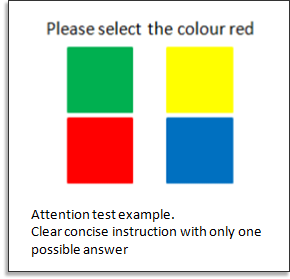

An example of one of our attention test questions:

When should an attention test be used?

We recognise that the vast majority of respondents have genuine intentions, so we don’t want to disrespect them by bombarding them with multiple tests in every survey. At the same time, we want to deliver the best value for our clients by giving them high-quality data.

According to market research experts, the optimal survey length is 10 minutes[1], while the average adult attention span is about 20 minutes[2]. Research shows that survey takers become less motivated after the 10-minute mark, and when this happens, they tend to put less cognitive effort into reading and answering the questions. Because of this known behaviour, we require an attention test in any survey that exceeds 15 minutes.

Additional studies[3] have found that respondents sometimes ‘straight-line’ (select the same answer for several questions in a row) when presented with a large number of items on a Likert scale or grid. Therefore, if a survey contains grid questions, we strongly recommend adding an attention test. Placed strategically, an attention test is an excellent way to refocus the respondent and improve the quality of results.
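To make the idea of straight-lining concrete, here is a minimal Python sketch that flags a grid response when the same answer is selected for many consecutive rows. It assumes answers are coded as integers (for example 1 to 5 on a Likert scale); the function names and the default threshold of eight consecutive identical answers are hypothetical choices for illustration, not a description of how Pureprofile’s quality checks are implemented.

```python
from typing import Sequence


def longest_identical_run(answers: Sequence[int]) -> int:
    """Return the length of the longest run of consecutive identical answers."""
    if not answers:
        return 0
    longest = current = 1
    for previous, answer in zip(answers, answers[1:]):
        # Extend the run if this row repeats the previous answer; otherwise start a new run.
        current = current + 1 if answer == previous else 1
        longest = max(longest, current)
    return longest


def is_straight_lining(answers: Sequence[int], min_run: int = 8) -> bool:
    """Flag a grid response if at least `min_run` consecutive rows share the same answer.

    The default of 8 is a hypothetical threshold chosen for illustration only.
    """
    return longest_identical_run(answers) >= min_run


# Hypothetical 10-row grid answered on a 1-5 Likert scale.
print(is_straight_lining([3, 3, 3, 3, 3, 3, 3, 3, 3, 3]))  # True
print(is_straight_lining([4, 2, 5, 3, 3, 1, 4, 2, 5, 3]))  # False
```

A check this simple will also flag a respondent who genuinely holds the same view on every statement, so a straight-lining flag is best read as a prompt for review rather than grounds for removal on its own.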

How many times should an attention test be used in a single survey?

A recent study[4] tested the effectiveness of one versus two attention tests in a single survey. It found that although respondents might fail one of the tests, they were less likely to fail both. This suggests that a respondent may not be careful at one particular point in a survey, but that doesn’t necessarily mean they lack care throughout. With this in mind, we believe that two attention tests per survey provide a reliable outcome: if a respondent fails both, we can confidently disregard their responses.
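The two-test rule can be expressed as a short Python sketch. It assumes each respondent’s results on the two attention tests are recorded as a pair of booleans; the `Respondent` type, the function names and the four sample respondents are hypothetical, made up purely for illustration. The sketch contrasts disregarding only respondents who fail both tests with a stricter rule that would remove anyone who fails either test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Respondent:
    respondent_id: str
    # Hypothetical record: (passed first attention test, passed second attention test)
    passed_tests: tuple[bool, bool]


def should_disregard(respondent: Respondent) -> bool:
    """Disregard a respondent only if they failed BOTH attention tests."""
    return not any(respondent.passed_tests)


def fails_any_test(respondent: Respondent) -> bool:
    """Stricter alternative: flag a respondent who failed EITHER test."""
    return not all(respondent.passed_tests)


# Hypothetical sample of four respondents.
sample = [
    Respondent("r1", (True, True)),    # paid attention throughout
    Respondent("r2", (False, True)),   # one lapse, then re-engaged
    Respondent("r3", (True, False)),   # one lapse later in the survey
    Respondent("r4", (False, False)),  # failed both tests
]

disregarded = [r.respondent_id for r in sample if should_disregard(r)]
strict_removed = [r.respondent_id for r in sample if fails_any_test(r)]

print(disregarded)     # ['r4']
print(strict_removed)  # ['r2', 'r3', 'r4']
```

Under the two-test rule, r2 and r3, who each lapsed once, stay in the sample; only r4 is removed.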

Ensuring we receive high-quality data for clients, while still creating an enjoyable and engaging experience for our panel members, is our top priority. The attention test is just one of many measures we have carefully employed to guarantee the best outcomes for everyone.

Want to learn how to get the best research results? Get in touch with one of our data experts today.

[1] Revilla, M., & Ochoa, C. (2017). Ideal and maximum length for a web survey. International Journal of Market Research, 59, pp. 557–565.

[2] Cornish, D., & Dukette, D. (2009). The Essential 20: Twenty Components of an Excellent Health Care Team. Pittsburgh, PA: RoseDog Books, pp. 72–73.

[3] Herzog, A. R., & Bachman, J. G. (1981). Effects of questionnaire length on response quality. Public Opinion Quarterly, 45, pp. 549–559.

Gummer, T., Roßmann, J., & Silber, H. (2021). Using instructed response items as attention checks in web surveys: Properties and implementation. Sociological Methods & Research, 50, pp. 238–264.

[4] Liu, M., & Wronski, L. (2018). Trap questions in online surveys: Results from three web survey experiments. International Journal of Market Research, 60, pp. 32–49.